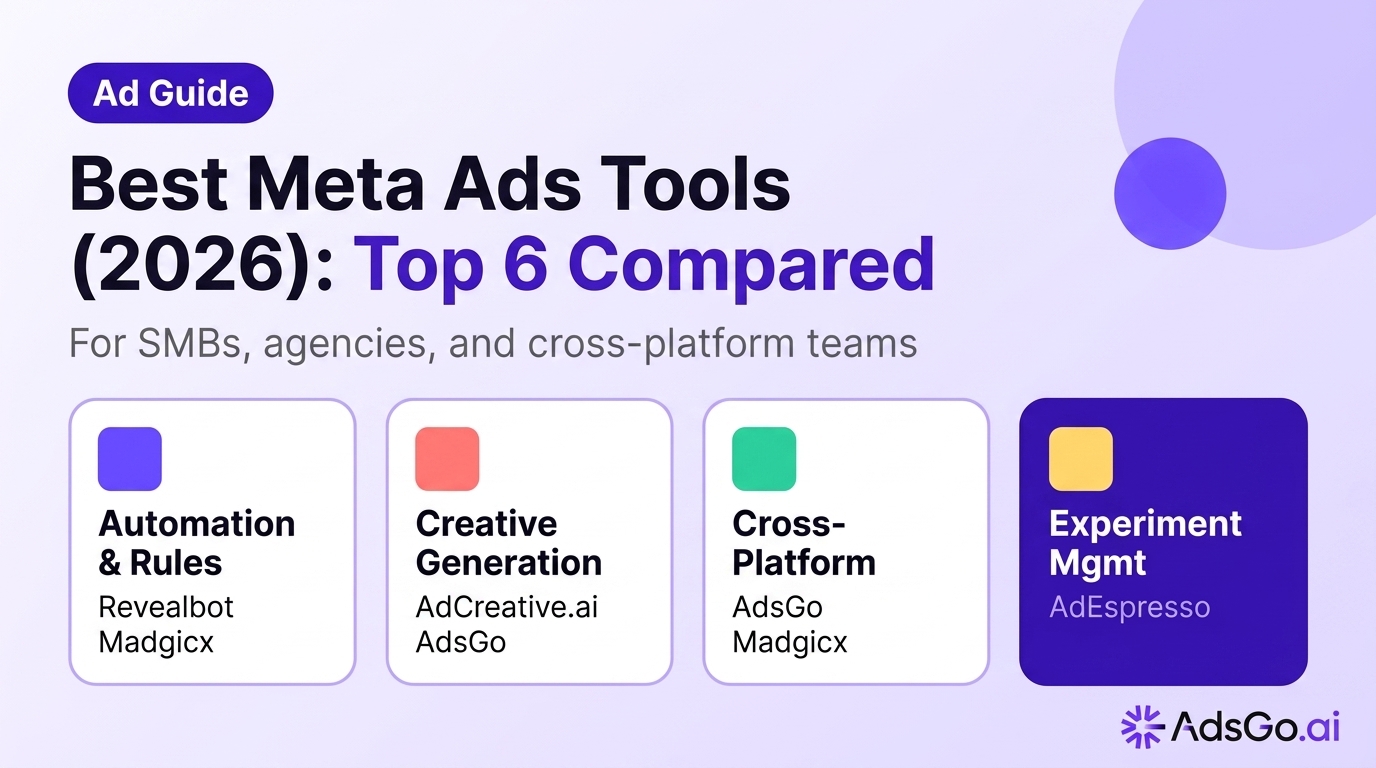

Meta campaigns reward iteration speed: the faster you test audiences, budgets, and creative, the faster you find a scalable CPA. The best Meta Ads tools in 2026 are not the ones with the longest feature lists — they are the ones that match how you decide (rules vs ML vs native Advantage+), how you measure after iOS signal loss, and whether you need Meta-only depth or a deliberate Google + Meta roadmap.

What are Meta Ads tools?

Meta Ads tools are third-party software products that extend or automate what you can do inside native Meta Ads Manager. The category covers four distinct jobs, and most tools do only one of them well:

| Job | What it does | Example tools |
|---|---|---|
| Automation & rules | Scheduled bid/budget changes, if-then rules, alerts | Revealbot, Madgicx |
| Creative generation | AI-generated copy, images, video scripts, variant testing | AdCreative.ai, AdsGo Auto Creative |
| Cross-platform management | Google + Meta reporting and budget allocation in one view | AdsGo |
| Experiment management | Structured A/B tests, publishing workflows | AdEspresso |

The critical distinction: these tools sit on top of Meta's own AI (Advantage+, Advantage+ Shopping Campaigns). They do not replace it — they augment what Meta's automation can't see (cross-channel context, creative supply, rule-based guardrails) or reduce the manual work around it.

Why Meta Ads tools matter in 2026

Three structural shifts in 2026 have made native Ads Manager harder to manage alone:

1. Advantage+ delegates more decisions to Meta's AI. Meta keeps pushing Advantage+ placements, audiences, and creative treatments that delegate auction decisions to system ML. That's not "lazy media buying"; it means your job is now measurement quality, creative supply, and guardrails. If you're still micro-managing placements while conversion events are dirty, you're polishing the wrong surface.

2. iOS signal loss changed what's reliably measurable. Post-ATT, modeled conversions and aggregated reporting are normal. If your tool only shows last-click ROAS, you're optimizing the wrong outcome. Triangulate incrementality, cohorts, and offline signals, or expect scaling decisions that look brave on paper and fail in finance.

3. Cross-channel complexity demands a unified view. Most advertisers now run Meta and Google simultaneously. Each platform reports its own ROAS as "the truth." Without a cross-channel layer, budget allocation decisions happen in spreadsheets after two exports, costing hours and introducing allocation errors.

When native Meta tools aren't enough:

- You run Meta and Google and need one weekly P&L narrative

- Creative throughput (not bids) is the performance bottleneck

- Creative fatigue hits before budget caps do

- Your team spends more time reconciling dashboards than making decisions

Best Meta Ads tools (2026) — quick comparison

Six tools cover the practical range for most Meta advertisers — from SMB automation to enterprise creative ops:

| Tool | Best for | Automation style | Starting price |
|---|---|---|---|
| AdsGo | Google + Meta cross-platform teams | AI-assisted budget + creative drafts | Free starter |
| Madgicx | Dense Meta operator stack | Rules + ML hybrid | ~$49/mo |
| Revealbot | Transparent rule-based automation | Explicit if-then rules | ~$99/mo |
| AdEspresso | Structured A/B experiments | Experiment workflows | ~$49/mo |
| AdCreative.ai | Creative volume at scale | Generative AI creative | ~$21/mo |
| Hootsuite Ads | Enterprise social governance | Org-wide approval workflows | Custom |

The short answer: match tool to your bottleneck. Need automation rules → Revealbot. Need creative volume → AdCreative.ai. Need Meta-first depth → Madgicx. Running Google + Meta together → AdsGo.

Meta Ads tools by use case (2026)

Best Meta Ads tools for SMBs

For most small businesses spending $1k–$5k/month on Meta, native Ads Manager plus disciplined creative rotation is often enough. Add a third-party tool when:

- Creative throughput breaks before budget does

- You run Meta and Google as one P&L and need one weekly narrative

- You want AI-assisted drafts and budget suggestions without maintaining two consoles

Best SMB picks:

| Spend level | Recommended tool | Why |
|---|---|---|
| Under $1k/month | Native Meta Ads Manager | Zero cost, all core functionality |
| $1k–$3k/month | Native + AdCreative.ai | If copy or design is the bottleneck |
| $3k–$10k/month | AdsGo or Revealbot | Cross-platform or rule-based automation |

Do not add a third-party tool until pixel health, key events, and creative rotation are stable. Tools solve process problems, not measurement problems.

Best Meta Ads automation tools

Automation tools execute rule-based or ML-driven changes — bid adjustments, budget shifts, pauses, and alerts — without manual intervention.

Revealbot — best for transparent, rule-based automation

- Explicit if-then logic: "if CPA > $X for 3 days, pause ad set"

- Slack-visible change notifications — full transparency

- Strong for teams that want to see and approve every automated action

- Not a creative ideation suite — pair with AdCreative.ai if creative is also a bottleneck

- Best when: your organization wants code-like rules and alerts over opaque ML
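To make the if-then style concrete, here is a minimal Python sketch of the "if CPA > $X for 3 days, pause ad set" rule. It is not Revealbot's API or rule syntax: `DailyStats`, `daily_cpa`, and `should_pause` are hypothetical names, and reading "for 3 days" as "each of the last three days" is an assumption.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class DailyStats:
    """One day of delivery for a single ad set (hypothetical shape)."""
    spend: float
    conversions: int


def daily_cpa(day: DailyStats) -> float:
    """Cost per acquisition for one day.

    A day with spend but zero conversions is treated as infinite CPA;
    a day with no spend and no conversions is treated as 0.
    """
    if day.conversions == 0:
        return float("inf") if day.spend > 0 else 0.0
    return day.spend / day.conversions


def should_pause(history: Sequence[DailyStats], cpa_limit: float, days: int = 3) -> bool:
    """Return True when each of the most recent `days` days had CPA above `cpa_limit`."""
    if len(history) < days:
        return False  # not enough data to trigger the rule
    return all(daily_cpa(d) > cpa_limit for d in history[-days:])


# Made-up data, oldest day first: daily CPAs are 20.00, 43.33, 55.00, 90.00.
history = [
    DailyStats(spend=120.0, conversions=6),
    DailyStats(spend=130.0, conversions=3),
    DailyStats(spend=110.0, conversions=2),
    DailyStats(spend=90.0, conversions=1),
]
print(should_pause(history, cpa_limit=40.0))  # True: the last three days all exceed $40
print(should_pause(history, cpa_limit=50.0))  # False: one of the last three days is under $50
```

Keeping the rule this explicit is what makes it auditable: anyone can read the condition and reproduce why an ad set was paused.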

Madgicx — best for dense Meta-native automation

- Maximum Meta control surfaces: scaling playbooks, audience tools, bid rules

- ML-assisted optimization alongside manual rule layers

- Dense dashboard designed for experienced Meta operators

- Cross-platform alignment still requires a separate layer

- Best when: Meta is your center of gravity and you have senior staff to own the rule stack

Advantage+ (native) — when it's enough

- Meta's built-in automation handles delivery decisions when events are clean and creative supply is steady

- No additional cost; integrates natively with all Meta surfaces

- Loses effectiveness when measurement is thin or creative stalls

- Best when: under $5k/month with clean pixel data and 3+ fresh creatives

Best Meta Ads creative tools

Creative tools generate, test, or manage ad copy, images, and video variants at speed — addressing the most common scaling bottleneck for Meta advertisers.

AdCreative.ai — best for creative volume at scale

- Rapidly generates creative variants for feed ads, stories, and carousels

- Reduces time-per-variation vs manual design by 70–80%

- Strong when you need distinct angles to exit audience overlap or fight fatigue

- Policy and brand review still mandatory — AI speed is not compliance

- Best when: creative supply is the bottleneck and conversion tracking is already trustworthy

- Pricing: from ~$21/month

AdsGo Auto Creative — best for integrated creative + campaign workflow

- AI-generated campaign drafts inspired by what already runs

- Creative generation and refresh in one workflow with campaign publishing (Drafts & Recom)

- Reduces tool sprawl: creative + campaign management + cross-channel reporting in one place

- Best when: you want creative generation without maintaining a separate design tool

- See: Auto Creative

AdEspresso — best for structured creative experiments

- Clear A/B experiment framing for creative hypothesis testing

- Good when hypotheses are already defined and you need systematic test structure

- Best when: standardizing tests across teams; publishing discipline matters more than ML novelty

For a deeper guide on creative fatigue timing and rotation cadence, see how to reduce Facebook ad creative fatigue.

Best Meta Ads tools for agencies

Agencies have different priorities than in-house teams: auditability, repeatability, client-ready reporting, and change transparency matter as much as marginal CPA.

What agencies need from Meta Ads tools:

- Change logs — who changed what, when, and why

- Approval workflows — client sign-off before changes go live

- Multi-account management — scale across clients without per-account manual work

- White-label reporting or clear attribution of automated actions

Best agency picks:

| Need | Tool | Why |
|---|---|---|
| Rule-based automation with audit trail | Revealbot | Explicit rules, Slack alerts, change history |
| Multi-account dashboard + rules | Madgicx | Dense Meta controls across accounts |
| Org-wide approval workflows | Hootsuite Ads | When social governance is already Hootsuite |
| Cross-platform client reporting | AdsGo | One view when clients run Google + Meta |

Agencies: optimize for auditability first, ROAS second. Clients fire you when they can't explain what happened — not when ROAS dips for a week.

Cross-platform Meta + Google tools

When you run Meta and Google simultaneously, native tools in each platform only answer half the question. The week you can't tell finance "which channel earned the next $1,000" is the week you need a cross-channel layer.
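One rough way to frame the "next $1,000" question is marginal ROAS: the extra revenue per extra dollar between two spend levels, compared across channels. The sketch below uses made-up numbers and a hypothetical `marginal_roas` helper. It is a naive before/after comparison of platform-reported figures, not an incrementality test.

```python
def marginal_roas(spend_before: float, revenue_before: float,
                  spend_after: float, revenue_after: float) -> float:
    """Extra revenue earned per extra dollar between two spend levels."""
    extra_spend = spend_after - spend_before
    if extra_spend <= 0:
        raise ValueError("spend_after must exceed spend_before")
    return (revenue_after - revenue_before) / extra_spend


# Illustrative, invented weekly figures:
# (spend, revenue) before and after a $1,000 budget increase.
channels = {
    "meta": ((10_000.0, 30_000.0), (11_000.0, 31_500.0)),
    "google": ((10_000.0, 25_000.0), (11_000.0, 27_200.0)),
}

for name, (before, after) in channels.items():
    average = after[1] / after[0]            # revenue / spend at the new level
    marginal = marginal_roas(*before, *after)
    print(f"{name}: average ROAS {average:.2f}, marginal ROAS {marginal:.2f}")
```

In this invented example Meta shows the higher average ROAS (2.86 vs 2.47), yet Google returns more on the additional $1,000 (2.20 vs 1.50). That gap between average and marginal return is what per-platform dashboards tend to hide.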

AdsGo — best for Google + Meta as one operating system

- One dashboard for both channels: spend, ROAS, creative performance

- AI-assisted budget allocation across Google and Meta — not siloed per platform

- Structured launch workflows reduce setup drift across campaigns, regions, teammates

- Creative generation and campaign publishing reduce tool sprawl

- Starter plan free; see pricing

Best when:

- Finance asks how Meta and Google interact in one number

- Weekly budget decisions require two CSV exports and two meetings

- You want fewer tools, not more

Madgicx — partial cross-platform

- Meta-first with some Google modules

- Validate Google coverage for your specific use case before purchase

- Best when Meta is the center of gravity and Google is secondary

When you don't need a cross-channel tool:

- You only run Meta (no Google)

- Native exports and a shared spreadsheet are enough for your reporting cadence

- Budget decisions stay within one platform

Meta Ads tools comparison table

| Tool | Automation | Creative | Cross-platform | Agency-ready | Pricing |
|---|---|---|---|---|---|
| AdsGo | AI-assisted budget + drafts | Auto Creative + editable drafts | Google + Meta | Yes | Free starter |
| Madgicx | Rules + ML hybrid | Paired separately | Meta-first | Partial | ~$49/mo |
| Revealbot | Explicit if-then rules | Not creative-first | Meta-only | Yes (audit trail) | ~$99/mo |
| AdEspresso | Experiment workflows | Asset variants | Meta-only | Yes (experiments) | ~$49/mo |
| AdCreative.ai | Not a bid tool | Generative volume | Not applicable | Partial | ~$21/mo |
| Hootsuite Ads | Social org workflows | Linked assets | Social-wide | Yes (governance) | Custom |

Which Meta Ads tool should you use?

Use this decision framework to find the right tool in under 2 minutes:

If you are an SMB (under $5k/month):

- Creative is your bottleneck → AdCreative.ai

- You also run Google Ads → AdsGo

- You need rules and alerts → Revealbot (lite)

- You need nothing yet → native Ads Manager + clean pixel

If you are scaling ($5k–$50k/month):

- Meta-first, need dense controls → Madgicx

- Google + Meta, need unified view → AdsGo

- Rules + transparency are critical → Revealbot

- Creative volume is the ceiling → AdCreative.ai + any bid tool

If you are an agency:

- Need audit trail + client-facing rules → Revealbot

- Multi-account Meta ops → Madgicx

- Clients run Google + Meta → AdsGo

- Org already on Hootsuite → Hootsuite Ads

If you are an enterprise / DTC at scale:

- Catalog + creative ops at scale → Smartly.io or AdsGo

- Portfolio governance across markets → Skai

- Dense Meta + Google integrated → AdsGo

Full scorecard:

| Profile | Primary pain | Recommended tool |
|---|---|---|
| SMB, Meta-only, clean pixel | Creative throughput | AdCreative.ai |
| SMB, Google + Meta | Fragmented reporting | AdsGo |
| Scaling brand, Meta-first | Overlap + governance | Madgicx |
| Scaling brand, both channels | Cross-channel P&L | AdsGo |
| Agency, transparency-first | Audit trail, change logs | Revealbot |
| Agency, multi-account Meta | Repeatability + rules | Madgicx |
| Enterprise, governance | Multi-market approvals | Skai |
| Enterprise, retail creative | Feed + creative ops | Smartly.io |
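If you want the scorecard in a form a script or internal wiki can query, it can be sketched as a plain lookup table. The rows below are copied from the scorecard; the lowercase key encoding and the `Recommendation` and `recommend` names are hypothetical choices for illustration.

```python
from typing import NamedTuple, Optional


class Recommendation(NamedTuple):
    primary_pain: str
    tool: str


# Keys are (segment, situation), lowercased from the "Profile" column.
SCORECARD = {
    ("smb", "meta-only, clean pixel"): Recommendation("Creative throughput", "AdCreative.ai"),
    ("smb", "google + meta"): Recommendation("Fragmented reporting", "AdsGo"),
    ("scaling brand", "meta-first"): Recommendation("Overlap + governance", "Madgicx"),
    ("scaling brand", "both channels"): Recommendation("Cross-channel P&L", "AdsGo"),
    ("agency", "transparency-first"): Recommendation("Audit trail, change logs", "Revealbot"),
    ("agency", "multi-account meta"): Recommendation("Repeatability + rules", "Madgicx"),
    ("enterprise", "governance"): Recommendation("Multi-market approvals", "Skai"),
    ("enterprise", "retail creative"): Recommendation("Feed + creative ops", "Smartly.io"),
}


def recommend(segment: str, situation: str) -> Optional[Recommendation]:
    """Look up a scorecard row; returns None for profiles the table does not cover."""
    key = (segment.strip().lower(), situation.strip().lower())
    return SCORECARD.get(key)


print(recommend("SMB", "Google + Meta"))
print(recommend("Agency", "Transparency-first"))
print(recommend("SMB", "TikTok only"))  # not in the scorecard, so None
```

Returning `None` for unknown profiles is deliberate: the scorecard covers eight profiles, and a lookup should not guess outside them.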

When NOT to use Meta Ads tools

Most comparison articles skip this section. Knowing when a tool won't help is as important as knowing what it does.

Do not add a Meta Ads tool when:

- Conversion tracking is broken — AI will optimize the wrong objective faster; fix pixel, CAPI, and key events first

- You have under $1,000/month in spend — tooling ROI is marginal; invest in creative production instead

- You already have two optimization systems running simultaneously — consolidate before adding a third

- The bottleneck is landing page quality or offer-market fit — no automation tool fixes a broken checkout

- You need legal review on every creative claim — generative AI speed is not compliance

Tool-specific "do not use" cases:

- Revealbot — do not use when you need creative ideation; it's rules and alerts only

- Madgicx — do not use when Google is your primary channel and Meta is experimental; you'll pay for Meta depth you underuse

- AdCreative.ai — do not use when landing pages or measurement are broken; new assets scale the leak

- AdsGo — do not use when you will never run Google and want the leanest possible Meta-only seat

- AdEspresso — do not use when you need bid optimization beyond experiment structure; validate Hootsuite packaging for your tier

- Hootsuite Ads — do not use when performance speed matters more than approval governance

The most common failure: buying a tool to solve a measurement problem. No Meta Ads tool fixes bad pixel data, missing CAPI, or a checkout with 60% drop-off.

Running Meta and Google from two separate dashboards? AdsGo manages both channels in one workspace — AI optimization, creative drafts, and budget allocation included. Starter plan free. → Try AdsGo free

Pricing & budget guide (2026)

When does a paid Meta Ads tool earn its cost? Here's the spend-stage breakdown:

| Monthly Meta spend | Recommended approach | When to add tools |
|---|---|---|
| Under $1,000 | Native Meta Ads Manager only | Not yet; focus on conversion tracking and creative |
| $1,000–$3,000 | Native + one creative tool if design is the bottleneck | Only if creative production takes >5 hours/week |
| $3,000–$10,000 | Cross-platform (AdsGo) or rule-based (Revealbot) | When weekly reviews miss problems, or you're cross-platform |
| $10,000–$50,000 | Dedicated creative pipeline required | Dense Meta stack (Madgicx) or AI-assisted cross-channel (AdsGo) |
| $50,000+ | Custom stack or enterprise tooling | Prioritize measurement integrity and governance over dashboards |
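The spend-stage table can also be read as a simple threshold lookup. The sketch below maps a monthly Meta spend to the "Recommended approach" column. The table's ranges share endpoints ($1,000, $3,000, and so on), so treating each lower bound as inclusive is an assumption, and `recommended_approach` is a hypothetical helper name.

```python
from bisect import bisect_right

# Lower bound (USD/month) of each spend stage, in ascending order.
TIER_FLOORS = [0, 1_000, 3_000, 10_000, 50_000]

# "Recommended approach" column, copied from the table in the same order.
TIER_APPROACH = [
    "Native Meta Ads Manager only",
    "Native + one creative tool if design is the bottleneck",
    "Cross-platform (AdsGo) or rule-based (Revealbot)",
    "Dedicated creative pipeline required",
    "Custom stack or enterprise tooling",
]


def recommended_approach(monthly_spend: float) -> str:
    """Return the table's recommended approach for a monthly Meta spend.

    Assumes lower bounds are inclusive, so exactly $3,000 falls in the
    $3,000-$10,000 stage.
    """
    if monthly_spend < 0:
        raise ValueError("monthly_spend must be non-negative")
    # bisect_right counts how many floors are <= spend; subtract 1 for the index.
    return TIER_APPROACH[bisect_right(TIER_FLOORS, monthly_spend) - 1]


for spend in (500, 3_000, 75_000):
    print(spend, "->", recommended_approach(spend))
```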

Tool pricing snapshot (2026 estimates — verify on vendor sites):

| Tool | Entry price | Free tier |
|---|---|---|
| AdsGo | Free starter plan | Yes |
| Madgicx | ~$49/month | No |
| Revealbot | ~$99/month | Trial only |
| AdEspresso | ~$49/month | Trial only |
| AdCreative.ai | ~$21/month | Trial only |
| Hootsuite Ads | Custom | No |

Most tools don't fail — they're solving the wrong problem at the wrong budget stage. If budget allocation across campaigns is the pain, start from how to reduce Facebook Ads cost before buying another seat.

FAQ

What are the best Meta Ads tools in 2026?

The best Meta Ads tools depend on your bottleneck. For transparent automation: Revealbot. For dense Meta controls: Madgicx. For creative volume: AdCreative.ai. For Google + Meta in one view: AdsGo. For structured experiments: AdEspresso. For enterprise governance: Hootsuite Ads or Skai.

What are the best Meta Ads tools for small business?

For SMBs under $3k/month, native Meta Ads Manager plus disciplined creative rotation is usually enough. Add AdCreative.ai when creative production becomes the bottleneck, or AdsGo when you also run Google Ads and need one unified dashboard.

Do I need a third-party Meta Ads tool if I use Advantage+?

Often no — Advantage+ handles delivery decisions well when events are clean and creative supply is steady. Third-party tools earn their seat when creative throughput, cross-platform reporting, or rule-based transparency are the constraint — not when Advantage+ simply needs better inputs.

What is the best Meta Ads automation tool?

Revealbot for transparent, explicit rule-based automation (if-then logic with Slack alerts). Madgicx for denser Meta-native controls. AdsGo for AI-assisted automation across Google and Meta together. The "best" depends on whether you prioritize transparency (Revealbot) or depth (Madgicx).

How should agencies choose Meta Ads tools?

Agencies should optimize for auditability: change logs, approval workflows, and client-readable explanations of every automated action. Revealbot's explicit rules and Madgicx's dense dashboards both offer this. AdsGo is a strong fit when agency clients run both Meta and Google.

When should I NOT use a Meta Ads tool?

When conversion tracking is broken (fix pixel/CAPI first), when your spend is under $1k/month (tooling ROI is marginal), when you already have two optimization systems conflicting, or when your real bottleneck is landing page quality or offer-market fit. No Meta Ads tool fixes bad data or a broken checkout flow.

Is AdsGo only for Meta?

No — AdsGo's clearest differentiation is Google + Meta in one workflow. Meta-only teams can still use it for AI-assisted budget optimization and creative drafts (Drafts & Recom), but its cross-channel value is strongest when both channels are active.

What's the difference between Revealbot and Madgicx?

Revealbot prioritizes explicit, transparent rule-based automation — "if CPA > $X for 3 days, pause." Madgicx offers denser Meta-native tooling with more control surfaces and ML-assisted scaling playbooks. Revealbot wins for transparency-first organizations; Madgicx wins for experienced Meta operators who want maximum levers.